Benjamin Bayer developing soft sensors in MATLAB software

Achieving the high process efficiencies and optimization of Manufacturing 4.0 will require sophisticated software systems, mathematical modeling, and on-line process monitoring. Soft sensors are valuable tools that enable users to measure process parameters in real time. I spoke with Benjamin Bayer, data scientist at Novasign GmbH and doctoral candidate at the University of Natural Resources and Life Sciences in Vienna, Austria, about the potential of soft sensors for bioprocessing and important considerations for their use.

Introduction

How would you describe soft sensors to people who are unfamiliar with them? The term soft sensor is simply a contraction of software and sensor. Soft sensors are used for on-line process monitoring. Hardware measurement devices (typically invasive probes for temperature, pressure, and pH, but also noninvasive sensors such as those for turbidity, spectrometry, or off-gas analysis) all generate unspecific signals. The software part of a soft sensor translates those signals into specific values, such as concentrations, of a process variable that is measured indirectly and estimated on-line.

Published literature on soft sensors distinguishes between data- and model-driven types. What are the key differences between those two? Data-driven sensors — sometimes called black-box models — do not require process knowledge because they infer conclusions from data. They are regression models. You simply need on- and off-line data, then you perform a regression based on those data. Other algorithms also can be used. Partial least squares (PLS) is almost an industry standard now, and several linear and nonlinear regression models can be helpful.
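As a rough illustration of the data-driven idea described above, the following Python sketch calibrates a soft sensor from paired on-line and off-line data. It deliberately swaps in a single-input ordinary least-squares fit for PLS to stay short; the turbidity and biomass values, the units, and the function names are hypothetical and do not come from the interview.

```python
from statistics import mean


def fit_linear_soft_sensor(signals, references):
    """Fit reference = slope * signal + intercept by ordinary least squares."""
    if len(signals) != len(references) or len(signals) < 2:
        raise ValueError("need at least two paired on-line/off-line samples")
    s_mean, r_mean = mean(signals), mean(references)
    sxx = sum((s - s_mean) ** 2 for s in signals)
    if sxx == 0:
        raise ValueError("on-line signal is constant; a slope cannot be fitted")
    sxy = sum((s - s_mean) * (r - r_mean) for s, r in zip(signals, references))
    slope = sxy / sxx
    intercept = r_mean - slope * s_mean
    return slope, intercept


def estimate(signal, slope, intercept):
    """Translate one on-line reading into an estimate of the target variable."""
    return slope * signal + intercept


# Hypothetical calibration set: on-line turbidity (arbitrary units) paired
# with off-line dry cell weight (g/L) measured on the same samples.
turbidity = [0.10, 0.25, 0.40, 0.60, 0.80]
biomass_g_per_l = [1.0, 2.5, 4.0, 6.0, 8.0]

slope, intercept = fit_linear_soft_sensor(turbidity, biomass_g_per_l)
print(estimate(0.50, slope, intercept))  # estimate for a new on-line reading
```

A real calibration would typically use many inputs and a multivariate method such as PLS, but the workflow is the same: fit on paired data, then apply the fitted model to new on-line readings.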

Data-driven models are fast to implement, but they have huge drawbacks when applied to new process conditions that they were not trained on: They lack the ability to extrapolate properly. Model-driven soft sensors can be based on mass or energy balances, that is, on first-principle approaches. They incorporate process knowledge, and unlike data-driven models, they are a more suitable match for extrapolation. But of course, they also have drawbacks. The biggest problem is that they assume a mechanistic trend, and the applied mechanistic model often is too simple to describe the high complexity of bioprocesses accurately. So the use of model-driven soft sensors in upstream processing is not widespread.
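To make the model-driven approach concrete, here is a minimal Python sketch of a balance-based biomass estimate. It assumes that biomass formation is proportional to the oxygen uptake rate (OUR) through a constant yield coefficient, so that dX/dt = Y × OUR. The constant yield, the numbers, and the function name are illustrative assumptions, and the constant-yield assumption is exactly the kind of simplification that limits such models.

```python
def biomass_from_oxygen_uptake(times_h, our_mol_per_l_h, x0_g_per_l, yield_g_per_mol):
    """Integrate dX/dt = yield * OUR over time with the trapezoidal rule."""
    if len(times_h) != len(our_mol_per_l_h) or not times_h:
        raise ValueError("times and OUR readings must be non-empty and paired")
    estimates = [x0_g_per_l]
    for i in range(1, len(times_h)):
        dt = times_h[i] - times_h[i - 1]
        if dt <= 0:
            raise ValueError("time stamps must be strictly increasing")
        # Average OUR over the interval (trapezoidal rule)
        avg_our = 0.5 * (our_mol_per_l_h[i] + our_mol_per_l_h[i - 1])
        estimates.append(estimates[-1] + yield_g_per_mol * avg_our * dt)
    return estimates


# Hypothetical off-gas derived OUR readings (mol O2 per L per h) at hourly intervals
times = [0.0, 1.0, 2.0]
our = [0.01, 0.02, 0.03]

# Assumed starting biomass of 0.5 g/L and assumed yield of 40 g biomass per mol O2
trajectory = biomass_from_oxygen_uptake(times, our, x0_g_per_l=0.5, yield_g_per_mol=40.0)
print(trajectory)
```

Because the estimate follows from a balance rather than from a fitted correlation, it carries over to new operating conditions only for as long as the assumed yield holds.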

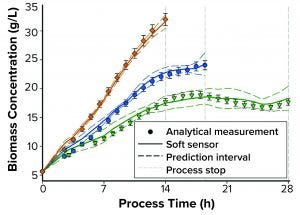

Figure 1: Comparing soft-sensor and analytical measurements of biomass concentration

Current and Future Applications

You are slated to deliver two presentations at the BioProcess International West conference in August 2020. One of those talks will focus on using soft sensors to determine biomass. Is that a parameter of primary interest in the industry? Biomass is a parameter of interest because it is very closely linked to product formation. But soft sensors also can be used for other targets: product concentration and media-component sensing, for instance. Whatever you measure off-line, you can afterward estimate on-line using soft sensors. Those off-line analytical measurements serve as the reference measurements for model calibration.

As soon as you trust your mathematical model, you are ready to implement soft sensors. This is done by correctly mapping the parameters that were used for model building with the corresponding real-time variables. Often, a manufacturing execution system (MES) environment is used for that process. For example, if you use cultivation temperature in a model, then you need to link that parameter, telling the model what value from the MES corresponds to cultivation temperature. Then data are collected in your environment, and a soft sensor simply uses its algorithm and translates nonspecific on-line signals to a specific variable of interest.
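The mapping step described above can be sketched in a few lines of Python. Everything named here is hypothetical: the tag names, the snapshot dictionary standing in for an MES read, and the placeholder model coefficients. The point is only that each model input is linked explicitly to one real-time variable before the model is evaluated.

```python
# Hypothetical mapping from model input names to MES tag names
TAG_MAP = {
    "cultivation_temperature": "BR01.TIC101.PV",
    "turbidity": "BR01.AT205.PV",
}


def collect_inputs(mes_snapshot, tag_map):
    """Pick the model inputs out of a snapshot of current MES values."""
    missing = [tag for tag in tag_map.values() if tag not in mes_snapshot]
    if missing:
        raise KeyError(f"MES snapshot lacks required tags: {missing}")
    return {name: mes_snapshot[tag] for name, tag in tag_map.items()}


def biomass_model(inputs):
    """Placeholder calibrated model; the coefficients are illustrative only."""
    return 10.0 * inputs["turbidity"] + 0.05 * (inputs["cultivation_temperature"] - 37.0)


# Hypothetical snapshot; unrelated tags are simply ignored by the mapping
snapshot = {"BR01.TIC101.PV": 37.0, "BR01.AT205.PV": 0.5, "BR01.PIC300.PV": 1.2}

inputs = collect_inputs(snapshot, TAG_MAP)
print(biomass_model(inputs))
```

Failing loudly when a mapped tag is missing is a deliberate choice in this sketch: a silently defaulted input would produce an estimate that looks valid but is not.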

At what stages of biopharmaceutical production can soft sensors be used? Soft sensors receive much interest at the production stage. Monitoring target variables of interest such as quality attributes in real time — or even multiple steps ahead — is of great value to biomanufacturers, especially considering the quality by design (QbD) initiative.

But a soft sensor can be applied during any stage, including process development. For example, by using a soft sensor to estimate biomass concentration, you can induce product formation or transfect cells always at a similar cell density, which increases reproducibility of experiments. With a suitable model structure and novel fermentation techniques, you could even accelerate process development. Using a suitable structure, you can develop models for continuous processes, taking into account material balances and dilution factors.
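For continuous processes, the simplest material balance that includes a dilution term is the biomass balance of an ideal chemostat, dX/dt = (mu − D) × X, where mu is the specific growth rate and D the dilution rate. The Python sketch below integrates that balance with an explicit Euler scheme. A constant growth rate and the parameter values are assumptions made for illustration, not a model from the interview.

```python
def simulate_chemostat_biomass(x0_g_per_l, mu_per_h, dilution_per_h, dt_h, n_steps):
    """Explicit Euler integration of dX/dt = (mu - D) * X."""
    if dt_h <= 0 or n_steps < 0:
        raise ValueError("dt_h must be positive and n_steps non-negative")
    trajectory = [x0_g_per_l]
    for _ in range(n_steps):
        x = trajectory[-1]
        # Growth adds biomass; dilution washes it out of the vessel
        trajectory.append(x + dt_h * (mu_per_h - dilution_per_h) * x)
    return trajectory


# Growth exactly balances dilution: biomass concentration stays constant
steady = simulate_chemostat_biomass(5.0, mu_per_h=0.1, dilution_per_h=0.1, dt_h=0.5, n_steps=10)

# Dilution exceeds growth: biomass is gradually washed out
washout = simulate_chemostat_biomass(5.0, mu_per_h=0.05, dilution_per_h=0.1, dt_h=0.5, n_steps=10)

print(steady[-1], washout[-1])
```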

What about downstream applications? Downstream processing consists mainly of two unit operations: chromatography and filtration. With chromatography, the timespan in which different species elute from a column is quite different from the times used in upstream processing. In an upstream process, conditions of cells remain almost constant over several hours or days. In chromatography, different species elute from a column within seconds or minutes. So if you use data-driven soft sensors based on multiple inputs as indications about the state of a process, then you need high computational power, and you have limited time for decision-making. Although off-line analysis of different species is a standard procedure, sensing those species (based on spectroscopic information fed to a soft sensor) is difficult in real time. But powerful first-principle models can be used to describe elution behavior of proteins from chromatography columns (e.g., GoSilico software). Such models can be used as soft sensors.

Real-time release in chromatography is nevertheless a goal of interest to the industry. In this approach, impurities not only are sensed, but peak-cutting decisions also are based on such models. For cross-flow filtration, we are working on multistep-ahead models. You can think of those as predictive models. Such an approach would assume that if the prevailing process conditions (inputs) are maintained, then filtration will be over in x minutes. With a digital twin, you can change process conditions to change the duration of a process. That is an example of a powerful soft-sensor application in a downstream operation.
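The "filtration will be over in x minutes" idea can be illustrated with a deliberately simple Python sketch. It assumes a constant permeate flow, which real cross-flow filtration does not have because flux usually declines as the retentate concentrates. The function name and the numbers are hypothetical, and the multistep-ahead models mentioned in the interview are not specified there.

```python
def minutes_to_target_volume(current_volume_l, target_volume_l, permeate_flow_l_per_min):
    """Predict the remaining time if the current permeate flow is maintained."""
    if permeate_flow_l_per_min <= 0:
        raise ValueError("permeate flow must be positive")
    remaining_l = max(current_volume_l - target_volume_l, 0.0)
    return remaining_l / permeate_flow_l_per_min


# Prevailing conditions: 50 L retentate, 10 L target, 0.5 L/min permeate flow
baseline = minutes_to_target_volume(50.0, 10.0, 0.5)

# "What if" scenario on the model: doubling the permeate flow halves the duration
faster = minutes_to_target_volume(50.0, 10.0, 1.0)

print(baseline, faster)
```

Running the second scenario on the model rather than on the plant is the digital-twin use described above: the operator sees the effect of a changed input before applying it.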

Upcoming BPI West Presentations

Benjamin Bayer is scheduled to highlight the use of soft sensors in bioprocessing during his presentations at the BPI West conference on 10–13 August 2020 in Santa Clara, CA. I asked him about his goals for these presentations.

“My first presentation will have three objectives. The first is to describe my team’s development of biomass soft sensors, which were based on standard process parameters and on data gathered by an advanced two-dimensional (2D) fluorescence probe. This part will be an overview of the entire workflow: from data gathering, to application of on-line data to the algorithm for model training, to model validation for testing soft-sensor performance. The second objective is a focus on process understanding because in the end, that is what regulatory authorities demand, especially of 2D fluorescence, which is not new but has not been explored fully. Soft sensors introduce great risk for confusing correlation of parameters with causation. Of course, no one wants that. Soft sensors should be reliable and robust when applied to new data. So the need to understand a process and the underlying data will be a critical part of that first presentation. I will conclude with a look at how we can bridge the gap from current uses of soft sensors to advanced (or even predictive) process control.

“Our early experiments yielded two key lessons for the implementation of a predictive soft sensor. The first was that we needed to change our model inputs because we were using 2D fluorescence data. You cannot control or influence spectrometric data. You can only read them. So if we wanted to move toward predictive control, we would need to include only controllable parameters in the model, such as cultivation temperature, and balances. We also needed to find a different model structure. We already did that and found a suitable structure to develop predictive soft sensors by using hybrid modeling. My second talk will be about that topic.”

Implementation

How easily can companies integrate soft sensors into their information technology (IT) systems? And what technology infrastructures are needed to use soft sensors effectively? We use a common approach: After model building in MATLAB or our software, we export a model structure using a dynamic-link library (DLL) file. If your modeling toolbox and the MES use the same programming language, you can simply import the DLL file within the MES environment and map the required variables. Thus, the primary concerns when implementing a soft sensor are data handling and code. Some soft sensors require low computation and communication power, whereas some have higher demands. The ones with low requirements should fit seamlessly into existing industrial automation systems, so they can be incorporated with little effort. But sensors with higher demands for calculations and data transfer would need independent implementation.

For Novasign and the University of Natural Resources and Life Sciences, integration of our soft sensors was easy. There was not much need for high computational power, so we were able to add soft sensors on our existing control system. So realizing a soft sensor in your current automation system is possible, but success will depend on your model’s calibration and the computational capability of your hardware. If your software needs to do only some basic calculations, that is not a problem. Otherwise, a soft sensor should be applied with separate hardware. If you need help, you can always contact us.

How might soft sensors get facilities closer to true QbD capabilities? I think that soft sensors will fit well into the QbD picture. Since the start of the QbD and process analytical technology (PAT) initiatives, soft sensors have seen a boost in their application within the highly regulated biopharmaceutical industry. If you think about future applications, soft sensors have great potential. Once you have a predictive model built on controllable process parameters, you can start thinking about implementation of a model predictive-control strategy, which would be the goal in a QbD approach. If you always could access your desired process variables on-line and in real time, and thus adapt a process and counteract deviations if necessary, that would be the ideal state. Soft sensors are highly valuable tools and the first step toward such applications because they provide operators with information about the state of a process.

Implementation Considerations

What advice would you give to companies that are interested in applying soft sensors to their bioprocesses? Gaining acceptance from regulatory authorities poses a potential difficulty because not much is known about soft-sensor applications in the bioprocess industry. Also, soft sensors are available but not widely used now because of technological limitations relating to available on-line measurements and a general lack of know-how. Many advanced sensor systems are available, but they possess high error ranges with respect to their measurements. Analytical measurements need to be precise, and the average error must be known to develop accurate models.

If you do not understand the uncertainty of the measurements that you are building your model on, then you cannot judge the predictions of that model. Even if soft sensors have much potential, you need to take great care and devote considerable experimental effort to constructing a good model. You want to think early about what you want to measure and then do it correctly. Otherwise, you might need to repeat experiments.
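One hedged way to put numbers on that point is to compare the model's error against the scatter of the reference assay itself. The Python sketch below computes the root-mean-square error (RMSE) of soft-sensor estimates and the standard deviation of replicate reference measurements, then reports their ratio. The data and function names are hypothetical, and a single set of replicates is a simplification of a proper assay-precision study.

```python
from math import sqrt
from statistics import stdev


def rmse(predictions, references):
    """Root-mean-square error between model estimates and reference values."""
    if len(predictions) != len(references) or not predictions:
        raise ValueError("predictions and references must be non-empty and paired")
    squared = [(p - r) ** 2 for p, r in zip(predictions, references)]
    return sqrt(sum(squared) / len(squared))


def error_relative_to_assay(predictions, references, replicate_measurements):
    """Return (model RMSE, reference-assay standard deviation, their ratio)."""
    assay_sd = stdev(replicate_measurements)
    if assay_sd == 0:
        raise ValueError("replicates show no scatter; assay precision is unknown")
    model_rmse = rmse(predictions, references)
    return model_rmse, assay_sd, model_rmse / assay_sd


# Hypothetical biomass data in g/L
soft_sensor = [1.3, 2.0, 2.7]
off_line = [1.0, 2.0, 3.0]
replicates_of_one_sample = [4.8, 5.0, 5.2]

model_rmse, assay_sd, ratio = error_relative_to_assay(
    soft_sensor, off_line, replicates_of_one_sample
)
print(model_rmse, assay_sd, ratio)
```

A ratio near or below 1 suggests that the model error cannot be distinguished from the noise of the reference method, whereas a ratio well above 1 points to the model itself as the main source of error.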

Soft sensors have endless applications, but you need to think about whether it makes sense to use them. This relates to the correlation-versus-causation problem I described above. For example, you can develop a soft sensor based on 2D fluorescence to estimate concentration of an analyte (e.g., glucose). Even though glucose itself is not fluorescent, you will get a model and well-performing estimations on your training data.

However, once that model is applied to new data, it will fail to deliver reliable estimations simply because it lacks a causal link between the 2D fluorescence data and glucose concentration. If you want to, you can correlate almost everything. There are websites devoted to correlation studies that fit data nearly perfectly but don’t make sense. So researchers need to think about their setups and how to build reliable soft sensors based on the right process variables.

It is important to raise awareness about the potential of soft sensors and enhance some objectives for their use. That concerns both available hardware capabilities and software-sensor methodologies. Researchers need to make progress in those areas to go from simple soft-sensor monitoring to methods of advanced process control that are required by the US Food and Drug Administration. The bioprocess industry is heading in the right direction, but there is still much work in front of us.

Brian Gazaille is associate editor at BioProcess International. Benjamin Bayer is a data scientist at Novasign GmbH and a doctoral candidate at the University of Natural Resources and Life Sciences, Vienna, Austria.
