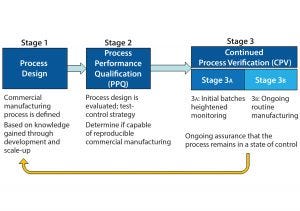

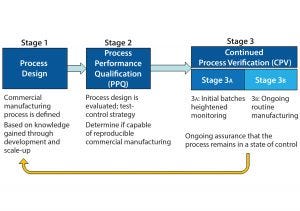

Figure 1: The three stages of process validation as defined by FDA’s 2011 guidance for industry on “Process Validation: General Principles and Practices”

As defined in the ICH Q10 guideline, a control strategy is “a planned set of controls, derived from current product and process understanding, that assures process performance and product quality” (1). Every biopharmaceutical manufacturing process has an associated control strategy.

FDA’s 2011 guidance for process validation (2) describes process validation activities in three stages (Figure 1). A primary goal of stage 1 is to establish a strategy for process control that ensures a commercial process consistently produces acceptable quality products. Biopharmaceutical development culminates in the commercial control strategy, a comprehensive package including analytical and process controls and procedures. Stage 2 process performance qualification (PPQ) is needed to establish scientific evidence that a process is reproducible and will consistently deliver high-quality products. Stage 3 of validation, continued process verification (CPV), provides an opportunity to improve process control through the lifecycle of a product. The 2011 FDA guidance clearly outlines the expectation for manufacturers to understand, detect, and control variation beyond development, throughout commercial manufacturing. This goal of CPV is a natural extension of control strategy development begun in stage 1.

The Chemistry, Manufacturing, and Controls (CMC) Strategy Forum (held 20–21 July 2015 in Washington, DC) focused on issues that arise for biopharmaceuticals during control strategy development and CPV, with special focus on the interface between the control strategy and CPV. Topics included the following:

Identification and control of short-term and long-term variability

Business and quality systems needed to support CPV

Effective control strategies resulting from “enhanced development,” including CPV implications

Regulatory filings: what gets submitted as part of the biologics license application (BLA) and marketing authorization application (MAA) and what remains onsite for inspection

Application of CPV to legacy products

CPV for products approved under an accelerated time frame (e.g., breakthrough therapies)

Session One: Regulatory and Strategic Considerations

The first session on regulatory and strategic considerations for CPV began Monday morning, 20 July 2015, with a presentation by Emanuela Lacana from FDA-CDER titled “Control Strategy and Validation.” Lacana (associate director of biosimilar and regulatory policy) provided an overview of regulatory guidance related to control strategy (ICH Q6B, Q8, Q10, and Q11) and CPV (1). She emphasized that no individual element in a control strategy works alone. A control strategy reflects the totality of its elements and includes a combination of specifications, process design and development, raw material controls, facilities and equipment controls, in-process controls, and appropriate monitoring of relevant parameters and attributes. Establishing that a process is capable of consistently delivering quality product (i.e., has an effective control strategy) is initially documented in a validation exercise. Lacana noted that validation is a state — not an event.

In that context, ongoing monitoring and review performed as part of CPV assures that a process is continually in a state of control. However, CPV cannot compensate for inadequate process development. Lacana provided a cautionary example from a recent FDA preapproval inspection in which the batch record was observed to differ significantly from the manufacturing process description in the original BLA. The inspectors found that process changes were made almost daily to adjust multiple unit operations. The applicant’s justification that significant process changes were in keeping with the spirit of CPV was not accepted.

Lacana then provided an overview of the 2015 draft guidance for industry: Established Conditions: Reportable CMC Changes for Approved Drug and Biologic Products (3). This guidance aims to provide clarity as to which elements of the CMC information in a marketing application constitute an established condition (regulatory commitment) as well as those sections in a common technical document (CTD)-formatted application where such information would be provided. With respect to overall control strategy, the draft guidance lists elements that would be established conditions. Those include a description of the manufacturing process, process parameter ranges, in-process tests and specifications, and the container–closure system.

Jörg Gampfer (Baxalta) gave the second presentation in this session, “Principal Approach to CPV: Integration with Quality Systems and Operating Mechanisms.” He referenced a general CPV roadmap document whose preparation was facilitated by the BioPhorum Operations Group (BPOG). That case study was compiled in response to FDA’s 2011 process validation guidance (2) and provides recommendations on the content of a CPV protocol and its rationale, along the lines of the A-Mab case study.

Two phases of CPV were described: an initial short-term phase in which data from ~30 batches are accumulated to set statistical process control (SPC) alert limits, review parameters, and update risks; and a long-term phase in which SPC is used to understand variations and trends and identify opportunities for process improvements. The CPV protocol interacts with other operational mechanisms of the quality system, including the annual product review, change control, and other lifecycle management activities. At a minimum, an initial CPV plan should cover a prioritized subset of process outputs (e.g., critical quality attributes, CQAs). In this approach, CPV is an ongoing activity that continuously verifies a manufacturing process, reacts to changes, and identifies opportunities for improvement. A CPV program is distinct from an annual product review and can provide additional data that have the potential to facilitate process changes.
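As a rough illustration of the short-term phase, the sketch below derives alert limits from an initial set of batches using an individuals control chart. The chart type, the 3-sigma multiplier, and the function names are assumptions made for illustration; the presentation did not specify how the limits are calculated.

```python
from statistics import mean


def individuals_chart_limits(values):
    """Return (lower, center, upper) alert limits for an individuals chart.

    Sigma is estimated as the average moving range divided by d2 = 1.128,
    the standard constant for moving ranges of two consecutive results.
    """
    if len(values) < 2:
        raise ValueError("need at least two batches")
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    sigma_hat = mean(moving_ranges) / 1.128
    center = mean(values)
    return center - 3 * sigma_hat, center, center + 3 * sigma_hat


def flag_batches(new_values, limits):
    """Return the positions of new results that fall outside the limits."""
    lower, _, upper = limits
    return [i for i, v in enumerate(new_values) if v < lower or v > upper]
```

Limits computed this way would then be reviewed and updated as more batches accumulate in the long-term phase.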

The third presentation in this session was “Pharmaceutical Product Life Cycle Management: Maintain the Validated State for Commercial Manufacturing Processes” from Andrew Chang (Novo Nordisk). He provided a detailed illustration of his company’s approach. Specifically, CPV is designed to meet three goals: maintain the validated state of product, process, and system; enable continuous improvement; and meet regulatory requirements for lifecycle validation.

The company’s CPV strategy includes comprehensive review, documentation, and evaluation of the impact of changes (science-based, data driven). It is based on annual verification exercises (calendar cycle) for each product and production unit covering all validated facilities, equipment, and processes. A significant outcome of this exercise is a report called a validation status summary (VSS). Overall verification of the validated state includes inputs from short-term review of control charts and results from extended sampling per a post-PPQ protocol. A VSS report is written by appropriate staff (including subject-matter experts) and is provided to management as an important input to a quality management review.

Timothy Schofield (MedImmune) gave the final presentation in this session, “Line of Sight to Continued Process Verification.” Line of sight is a concept that emphasizes the need to include long-term planning and think strategically during product and process development.

Product development can be conceptualized as a series of mathematical functions. Product quality attributes (PQAs) are a function of the manufacturing process. Pharmacokinetics and pharmacodynamics (PK/PD) are functions of PQAs. Patient safety and product efficacy are functions of PK/PD. Schofield provided examples to show how line of sight can facilitate process development by defining, building, and managing product quality.

Session One Panel Discussion

The presentations were followed by a roundtable of the speakers joined by Laura Durno (Health Canada). The group addressed several questions from the audience.

One question referenced the FDA guideline on expedited development programs. The document states, “FDA may exercise some flexibility on the type and extent of manufacturing information that is expected at the time of submission and approval for certain components (e.g., stability updates, validation strategies, inspection planning, manufacturing scale-up).” Can the FDA exercise flexibility for validation strategies for products not designated for expedited development? The FDA noted that there are no hard rules on what validation elements can be deferred from stage 2 (PPQ) to stage 3. Even products approved under an expedited review program need to provide sufficient data to show that the manufacturing process can produce quality product consistently.

Another question challenged the utility of the traditional requirement for three validation lots during stage 2 PPQ. In lieu of the traditional requirement, would it be possible to submit a CPV plan and have it assessed during the preapproval inspection (PAI)? The FDA labeled the three-lot requirement as a “negative experiment”: If you can’t do it, it says a lot; if you can, it doesn’t say much. Failure to make three consecutive passing lots is highly telling about the state of validation and actual readiness for commercial production. The FDA needs data that show a process can do what it is purported to do at least three times in a row. With respect to validation submitted for review or inspection, reviewers need relevant data showing that a process can function to make an intended complex biologically derived product. Those data are needed in the submission because a PAI for a new product is not always performed. In any case, a PAI is a short snapshot of site capabilities. Complex products and processes may require additional scrutiny that can occur during the review.

One attendee asked, “How much (if any) of the CPV can be preapproved for a sponsor to work within licensed products?” The FDA would see a control strategy defined and justified in a dossier but would see the internal postapproval CPV plan upon inspection. Health Canada has seen a CPV plan with prospective decision-making steps in a dossier that was approved. Some strategic elements could be included in a dossier and discussed during a review cycle, depending on what details are specifically included for review.

An audience member asked whether there are situations in which you could make a change and notify the agency after the fact. There are cases in which a prospective change plan can be approved in the dossier: e.g., dropping host-cell protein (HCP) or DNA testing after x number of lots are within specification limits. Such plans would be approved as a part of the product application.

Another audience member asked, “Who is the intended audience for CPV plans? Is CPV an internal management tool or external regulatory document (either in a dossier or during inspection)?” Differences in the regulatory histories of biotechnology companies at the meeting led to different perspectives in response to this question. Not all companies agree that CPV must be a regulatory commitment; some want to keep it as an internal best practice. They can then use the outcomes to communicate to regulators their plans for defined improvements (e.g., through comparability protocols).

Session Two: Preapproval Work in Support of Postapproval Validation Activities

Shawn Novick (Seattle Genetics Inc.) and JR Dobbins (Eli Lilly and Company) cochaired the second session on Monday afternoon, 20 July 2015. The presentations focused on how development of a commercial control strategy links to an initial PPQ exercise. The control strategy ultimately sets the foundation for the CPV throughout the commercial lifecycle of a product.

Ciaran Brady (Eli Lilly and Company) presented on the design of a process qualification and CPV program within an enhanced development framework. The presentation focused on a three-stage approach of process characterization, process validation, and continuous process control (verification). During process characterization, platform and product knowledge coupled with structured risk assessments are used to direct process and control strategy development. The resulting enhanced development program leads to a well-understood, holistic, and robust control strategy that is foundational to successful validation and maintenance of a process over a product’s lifecycle.

The overall PPQ would include three to five validation batches along with other activities (e.g., resin reuse and reprocessing protocols). The CPV plan is captured in a prospective protocol and includes real-time assessment of process performance with annual product reviews. Brady stressed the importance of the availability of electronic data collation and analysis systems for effective data reviews. Integration of a CPV plan with a quality management system is important to ensure that a process remains in a validated state as well as to identify and implement continuous improvement opportunities.

Eliana Clarke (Biogen) presented on process validation for biologics manufacturing with process analytical technology (PAT) and real-time release testing. She provided an overview of Biogen’s approach to “next-generation manufacturing processes” based on advanced process controls (APC). Clarke stressed that “process validation is not an event, it is a state.”

Clarke’s presentation stressed the importance of developing a clear connection of process parameters to product quality attributes. Models must be able to predict product quality, and companies should establish process signatures indicative of product quality and use on-line measurements and controls. She discussed APC, including application to a bioreactor unit operation, which involved real-time monitoring and adjustment of feed media and glucose and lactate levels to achieve consistent process performance and product quality. Incorporation of APC elements throughout a manufacturing process moves away from producing distinct process validation batches (an event) and moves toward continuous monitoring of batches, which enables CPV (a state).

Session Three: Practical and Statistical Considerations

Mark DiMartino (Amgen, Inc.) began the third session Tuesday afternoon, 21 July 2015, on “Practical and Statistical Considerations.” The presentation focused on the practical difference between process capability (Cpk) and process performance (Ppk) values. DiMartino showed through instructive graphics the relationship among the product distribution (mean and standard deviation), the product specifications, and Cpk/Ppk.

Ppk is grounded in the principle that when the three-standard-deviation limits on the process (mean ± 3SD) fall completely within the specification, Ppk is ≥1.0. More important, Ppk ≥ 1.0 ensures that the estimated out-of-specification (OoS) rate is ≤2,700 ppm, assuming normally distributed data. Cpk and Ppk diverge when a process is not in a state of statistical control (e.g., is subject to “special-cause variation” from some circumstance that is not inherent in the process).

Cpk provides an estimate of potential process performance in the absence of special-cause variation. It is calculated using short-term variability representing common-cause variation. Ppk provides an estimate of actual process performance and uses long-term variability, which includes variation due to special causes. A special consideration is “long-term common-cause variation.” Examples come from campaign-based manufacturing, in which a control chart may appear to show special-cause variation, but this is generally tolerated in manufacturing. When understood and used correctly, Cpk and Ppk are valuable tools for assessing how a parameter is performing compared with a specification.
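The distinction can be sketched numerically. The example below is a minimal illustration, assuming individual batch results, a two-sided specification, and normally distributed data. It estimates short-term sigma from the average moving range (one common convention) and long-term sigma from the overall sample standard deviation. The function names are hypothetical and are not taken from the presentation.

```python
from statistics import NormalDist, mean, stdev


def capability_indices(values, lsl, usl):
    """Return (cpk, ppk) for results against lower and upper spec limits."""
    if len(values) < 2:
        raise ValueError("need at least two results")
    center = mean(values)
    # Short-term sigma: average moving range divided by d2 = 1.128.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    sigma_short = mean(moving_ranges) / 1.128
    # Long-term sigma: overall sample standard deviation.
    sigma_long = stdev(values)
    # Distance from the process center to the nearest specification limit.
    margin = min(usl - center, center - lsl)
    return margin / (3 * sigma_short), margin / (3 * sigma_long)


def expected_oos_ppm(center, sigma, lsl, usl):
    """Expected out-of-specification rate (ppm) for a normal process."""
    dist = NormalDist(center, sigma)
    return (dist.cdf(lsl) + 1 - dist.cdf(usl)) * 1e6
```

For a process whose mean shifts between campaigns, the long-term sigma is inflated while the moving-range sigma is largely unaffected, so Ppk falls below Cpk. A centered normal process with limits at exactly ±3 standard deviations gives an expected OoS rate of about 2,700 ppm.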

In his presentation “Process Monitoring Applying QbD Principles in a Biopharmaceutical Environment,” Michael Kraus (Baxalta) began by illustrating why Shewhart control charts might not always be the right approach for managing quality. His main premise was that use of these charts is reactive process control, whereas the biopharmaceutical industry should move to preventive process control. In addition, use of these charts has model assumptions, one of which is normality of data, which is unlikely to hold because of the complexities of our processes and the limitations of our analytics.

Kraus made a case for changing the objective of monitoring from managing statistical signals to managing relevant events. This leads to linking process monitoring to potential patient risks. He proposed that specification limits set by quality parameters (QPs) be supported by risk limits based on process variation as well as control limits based on statistical limits. Kraus completed his talk by sharing a vision for using those limits together with a harmonized global strategy for site process monitoring, regular review, and a stepwise event triage and escalation system. The end product is process monitoring using process knowledge, risk evaluation, and statistics for continuous improvement.

Brian Nunnally (Biogen) gave the third presentation in this session, titled “Continued Process Verification: Practical Automated Control Charting Tools for Quality Control and Manufacturing.” He began by stating that expectations for manufacturers are clearly defined in the 2011 FDA Process Validation guidance (2): Understand the sources of variation, detect its presence and degree of impact, and control it in a manner commensurate with the risk it represents to a process and product. Automation of this effort will result in better control and better application of resources toward understanding and improving bioprocesses. However, implementation will depend on continued improvements in technology, the ability to adjust the sensitivity of the reporting (balancing creating “lots of shiny objects” with not “falling asleep at the wheel”), and a dedication to continuous improvement by all stakeholders. Applications in quality control are legion, including monitoring of controls, release testing, assay performance through invalid assay rates, and stability trends. How that is ultimately conducted to allow for optimization of change control implementation and regulatory reporting is something worthy of additional discussion.

Bernhard Pasenow-Grün (GSK Vaccines) gave the session’s final presentation, which was titled “Novel Data Analysis Method for Continued Process Verification Using Change-Point Analysis.” He presented on CPV implementation for his company’s vaccines manufactured in Marburg, Germany, and Siena, Italy. The program started by considering mainly output variables (release parameters) and yields for legacy products (CPV phase 1). The next step is to include process inputs (e.g., in-process controls and raw materials) (CPV phase 2). Parameters to be included in CPV phase 2 are based on a risk assessment. GSK uses an automated process of data collection and data analysis, followed by periodic review by the company’s cross-functional team from production, technical services, quality, and biostatistics.

Key to their data analysis is a novel tool for detecting systematic shifts in the process mean. Random permutations of a given data set are used to identify an optimal change point. The procedure is then applied recursively to successive segments of the data until no further changes are detected. The recursive algorithm incorporates assessment of normality of the residuals until it has achieved the optimal combination of data scale and change points.
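The recursive idea can be sketched as follows. This is not the GSK tool: it assumes a CUSUM-range statistic for a shift in the mean, a permutation p-value cutoff of 0.05, and a minimum segment size of five batches, and it omits the normality assessment and data-scale selection described in the presentation. All names and defaults are hypothetical.

```python
import random
from statistics import mean


def cusum_range(values):
    """Return the range of the cumulative sum of deviations from the mean
    and the index at which the cumulative sum is farthest from zero."""
    avg = mean(values)
    total, sums = 0.0, []
    for v in values:
        total += v - avg
        sums.append(total)
    extreme = max(range(len(sums)), key=lambda i: abs(sums[i]))
    return max(sums + [0.0]) - min(sums + [0.0]), extreme


def find_change_points(values, offset=0, n_perm=500, alpha=0.05,
                       min_size=5, rng=None):
    """Return sorted indices at which a new mean level appears to start."""
    rng = rng or random.Random(0)  # fixed seed keeps the sketch reproducible
    if len(values) < 2 * min_size:
        return []
    observed, extreme = cusum_range(values)
    # Permutation test: how often does shuffled data look as extreme?
    shuffled = list(values)
    exceed = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if cusum_range(shuffled)[0] >= observed:
            exceed += 1
    if exceed / n_perm > alpha:
        return []
    split = extreme + 1  # first index of the segment after the shift
    if split < min_size or len(values) - split < min_size:
        return []
    # Recurse into the segments on either side of the detected change.
    left = find_change_points(values[:split], offset, n_perm, alpha,
                              min_size, rng)
    right = find_change_points(values[split:], offset + split, n_perm,
                               alpha, min_size, rng)
    return left + [offset + split] + right
```

Each detected index marks the first batch of a new segment, which a reviewer could then compare against known events such as a raw material lot change.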

Pasenow-Grün used annotated graphics to show identification of out-of-expectation (OoE) values and change points, together with use of process capability scores (Cpk and Ppk), which the cross-functional team used to assess the state of control. The graphics showed not only the shifts and resulting process capability, but also how introduction of raw material lots can be included using color coding. This combined approach of change-point analysis, process capability analysis, and control charts allows manufacturers to distinguish between systematic changes (e.g., those due to change of raw material) and “single events” (e.g., those due to an operator failure). The approach also enables improved process understanding. Finally, Pasenow-Grün previewed a unique graphic display called rising sun plots, which GSK is exploring to facilitate assessment and review of their production.

Session Three Panel Discussion

The session was followed by a panel discussion in which the speakers were joined by Julia O’Neill (Tunnell Consulting), Martha Rogers (AbbVie), and Meiyu Shen (CDER, FDA). Much of the discussion focused on the correct use of statistical methods as tools to implement CPV, the systems required to accomplish that, and the acumen of those who are interpreting their output.

All speakers come from organizations that are invested in sound strategic and technical solutions and either partner closely with their statistics departments or have a strong understanding of statistics. Nonetheless, some regulators have observed that some companies use statistics inappropriately, leading to incorrect decisions about process performance. It was noted that more publications on the appropriate use of statistics for CPV might help. An appropriate solution is neither technical nor statistical alone, but a combination of technical and statistical understanding. It requires implementing a process with defined goals and applying statistical tools that best address process events and achieve those goals with minimal risk.

Successful partnership between technicians and statisticians depends on clear communication. Technicians should learn how to describe the goals of data assessment to statisticians in a manner that statisticians can translate into reliable analysis. And statisticians should learn to communicate results of complex statistical evaluations to technicians so that technicians can make a correct decision. There was unanimous agreement that well-designed graphics are a valuable way to communicate complex statistical results.

The panel noted that equipment used (both automated and manual) should be compliant in data handling, and processing software should be equally compliant. The level of regulatory rigor can depend on the use of a given system. Systems used to make business decisions can require less rigor than systems used in a good manufacturing practice (GMP) environment. FDA stipulated that any part of the system that is in the current GMP (CGMP) environment would require a 21 CFR Part 11 level of compliance.

A key point made in this and other sessions was the need to distinguish common cause, special cause, and long-term common-cause variability. Many biopharmaceutical processes have long-term common-cause variation, which can be mistaken for special-cause variation. That is usually a result of campaign effects, in which different sources of raw materials or equipment may be used from campaign to campaign. Usual SPC rules are sensitive to such effects. The panel discussed an alternative approach of monitoring campaigns and lots within campaigns differently.
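One way to quantify long-term common-cause variation is to separate within-campaign from between-campaign variance. The sketch below assumes equally sized campaigns and uses the standard one-way random-effects (method-of-moments) estimators. It illustrates the concept only and is not a method the panel endorsed; the function name is hypothetical.

```python
from statistics import mean, variance


def campaign_variance_components(campaigns):
    """Estimate (within, between) variance for equally sized campaigns.

    `campaigns` is a list of lists, one inner list of lot results per
    campaign. A negative between-campaign estimate is truncated to zero.
    """
    n = len(campaigns[0])
    if len(campaigns) < 2 or n < 2 or any(len(c) != n for c in campaigns):
        raise ValueError("need at least 2 campaigns of equal size >= 2")
    # Mean square within: average of the per-campaign sample variances.
    within = mean([variance(c) for c in campaigns])
    # Mean square between: n times the variance of the campaign means.
    ms_between = n * variance([mean(c) for c in campaigns])
    between = max(0.0, (ms_between - within) / n)
    return within, between
```

A large between-campaign component relative to the within-campaign component suggests that limits based only on short-term variation would generate signals at every campaign change.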

The effect of campaigns further complicates the interpretation of PPQ. A PPQ is usually a single campaign in the scheme of long-term manufacture and may be a weak marker of long-term process capability. That places a greater onus on CPV to help in the understanding and management of process variability.

In an earlier session an analogy had been made to bioanalytical method validation, in which a preliminary study is performed on proxy clinical samples and is followed by a plan to evaluate “in-study” samples (real clinical samples). By analogy, PPQ is the preliminary study of the process, and CPV is the plan to continue the study during commercial manufacture.

The panel emphasized two types of risk in performance monitoring. The first is the risk of missing a meaningful production event, which is related to the sensitivity of the system. The second is the risk of false alarms. Such risks arise from many factors, including whether appropriate statistical approaches are used to detect process events. Another factor is “overcontrol,” which leads to excess false alarms because of statistical multiplicity (increased risk of one or more false alarms with an increase in the number of parameters monitored) and correlations among process parameters. The latter can be managed using multivariate analysis.
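The multiplicity effect is easy to quantify under the simplifying assumption that the monitored parameters are independent, which correlated process parameters would violate. The function name below is hypothetical, and the per-chart rate of 0.0027 is simply the nominal false-alarm rate of a single 3-sigma limit check on normal data.

```python
def familywise_false_alarm_rate(per_chart_rate, n_parameters):
    """Probability of at least one false alarm across independent charts."""
    return 1 - (1 - per_chart_rate) ** n_parameters


# Example: 50 independently monitored parameters, each checked against
# 3-sigma limits, for a single batch.
rate = familywise_false_alarm_rate(0.0027, 50)
```

Under these assumptions, monitoring 50 parameters raises the chance of at least one false alarm per batch from 0.27% to roughly 13%.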

Finally, the panel touched on a foundational issue: specifications. Current practices in calculating specifications from manufacturing data place a burden on developing a scientifically sound and risk-based CPV plan. Doing so conflates the interpretation of a CPV event with conformance to specifications. Specifications (as defined in ICH Q6A) are the platform of tests and their acceptance criteria that help ensure the safety and efficacy of a commercial product. A CPV plan uses limits calculated from knowledge about the sources of process variability together with process capability indices such as Ppk to alert a manufacturer of a process event. When specification acceptance criteria are calculated from manufacturing data, an OoS result is the same as an out-of-trend (OoT) result, thereby rendering Ppk less useful in managing process performance. Future discussion of practical and statistical approaches to CPV should address the foundational issue of specification.

Session Four: Application to Legacy Products

The CMC Strategy Forum concluded on the afternoon of Tuesday, 21 July 2015, with a session on the application of CPV to legacy products. Anthony Lubiniecki (Janssen Pharmaceutical R&D) and Julia O’Neill (Tunnell Consulting) cochaired the session.

In the context of CPV, legacy products are those already in commercial production before the January 2011 publication of the FDA guidance on process validation (2). Legacy products present special challenges for CPV, because validation may have been completed without the heightened attention now paid to understanding sources of variability during development of a control strategy. CPV can provide an opportunity to gain a deeper understanding of the variability natural to such older manufacturing processes as well as potential impacts of process variation on product quality attributes.

The three session speakers introduced some valuable and innovative approaches to CPV for legacy products. These included updates on the BPOG collaboration on CPV and connections between the FDA draft guidance on established conditions (3) and CPV. All presenters emphasized long-term variation as part of the natural common-cause system for biopharmaceutical manufacturing.

Thomas Mistretta (Amgen) presented on “Use of Bayes Statistics and Cross-Product Performance Variance Data to Further Inform Product-Specific Process Control Limits.” Amgen uses the Bayes statistical approach to estimate sources of variation experienced at commercial scale, with the goal of establishing and updating realistic limits on process performance. Small-scale process development studies and even early process validation runs often have limited exposure to long-term sources of variation such as fluctuations in the characteristics of key raw materials. That is especially problematic for biopharmaceutical manufacturing, in which raw materials can be of natural origin. Mistretta said his company is well positioned to incorporate information on variation sources across common technology platforms producing multiple products. Combining cross-product platform data using Bayes statistical approaches enables the ongoing updating of parameter control limits.
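To make the idea of updating limits concrete, the sketch below shows a textbook conjugate normal update in which a prior for a parameter’s mean (for example, one informed by platform data from other products) is combined with product-specific batch results. It is not Amgen’s method, which was not described at this level of detail. It assumes a known process variance, and all names are hypothetical.

```python
def update_mean(prior_mean, prior_var, batch_values, process_var):
    """Conjugate normal update of a process mean with known process variance.

    Returns the posterior mean and posterior variance of the mean.
    """
    n = len(batch_values)
    post_var = 1 / (1 / prior_var + n / process_var)
    post_mean = post_var * (prior_mean / prior_var
                            + sum(batch_values) / process_var)
    return post_mean, post_var


def predictive_limits(post_mean, post_var, process_var, k=3.0):
    """k-sigma limits for the next batch from the posterior predictive."""
    spread = k * (post_var + process_var) ** 0.5
    return post_mean - spread, post_mean + spread
```

With few product-specific batches the limits stay close to the prior; as batches accumulate, the posterior variance shrinks and the limits are driven increasingly by the product’s own data.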

Heather Eurenius (Merck) discussed a case study during her presentation “Using Legacy Product Data to Inform and Improve Control.” Merck’s CPV program provides a mechanism to drive investment in process understanding and control. For some legacy products, developed long before the quality by design (QbD) framework was routine, inherent process variation may not be accounted for fully in process design. Thus process performance variability and product yield variability often are attributed to within-specification execution and raw materials variability. That can extend to the impact of analytical method variation on process performance. Eurenius’s case study included an example of the effect of process measurement system variability on total process performance. In Merck’s program, non-CQA and non-CPP (critical process parameter) data are essential for problem solving and achieving a deeper level of process understanding. Data collection and management infrastructure is an important enabler for long-term process success.

The last talk of the session (and of the forum) was “A Case Study of Continued Process Verification and Life Cycle Approach for a Well-Characterized Insulin Analog,” presented by Warren MacKellar (Eli Lilly). Lilly manufactures the oldest biologic (insulin), for which a CPV program plays an important role in assessing what is normal process performance and what is not. Early process development for such an old product could not have been fully comprehensive. New sources of variability such as improved biological raw materials must be evaluated over the lifecycle of manufacturing.

Session Four Panel Discussion

Session panelists included the speakers and Marcus Boyer (Bristol-Myers Squibb), Steven Fong (FDA CDER), and Ellen Huang (FDA CBER).

For legacy products, stage 2 of validation (PPQ) may provide little understanding of the sources of variation experienced during commercial manufacturing. CPV can be a useful mechanism for ongoing learning about process performance and the impact of process variability on product CQAs. Not surprisingly, the most frequently reported source of unexpected variation after PPQ for commercial products is raw materials. The variability inherent to test methods for biopharmaceutical processes is also a common source of variability throughout commercial manufacturing.

During lively discussions of the current validation paradigm, some regulators said that testing only three lots during PPQ “is a canary in the coal mine” or a “negative experiment.” If a process cannot be run reproducibly for just three lots, improvements to its control strategy may be needed. If the PPQ lots do run reproducibly, then further demonstration of long-term consistency is still needed. Failure to make three consecutive passing lots is highly telling about the state of process control and the actual readiness for commercial production.

There was further discussion about the concept of incorporating the three (or more) lots for PPQ into CPV, and making the CPV the only monitoring element required. The CPV program and lot performance then would be assessed during PAI. PPQ could be a special case of showing comparability between a development process and a commercial process much like there will be comparability exercises throughout the commercial lifecycle.

However, the FDA needs data that show the process can do what it is purported to do before approval. No one can review and approve a process without having relevant data demonstrating the process can function to make the intended complex biologically derived product. The FDA does not always conduct PAI for a new product and does not always look at the entire process control plan at the level a reviewer looks at PPQ in a dossier. PAI is a very short snapshot of site capabilities that does not allow time to review a CPV plan in depth.

Another topic of discussion was whether legacy control strategies should be updated with findings from CPV. Typically, adding new control elements is welcomed by regulators, but removing an approved control element may require extensive data to justify the change and support the (lack of) impact to a product. In some cases the FDA could approve the removal of a parameter if the data are abundant and show good history of control. Control points also may be moved upstream or downstream (e.g., to raw materials) to maintain process consistency more effectively.

Unexpected CPP variation that is detected during CPV does not necessarily cross over to quality systems. If the variation causes a CQA specification failure, that triggers an immediate quality system event. However, an excursion or trend in a CPP requires evaluation to understand the potential impact on CQA performance. For example, a one-time trend in a CPP, far from its limits, can be evaluated and explained within the CPV program, without entering the quality system formally. Other more persistent patterns of CPP issues may need to be escalated to quality systems. The BPOG CPV team is working on a paper addressing best practices for responding to statistical signals observed during CPV.

A key area of discussion with the FDA was on how to handle newly discovered entities that were previously present in legacy products. Newly discovered species revealed by more sensitive or specific analytical methods are possible. So sponsors should collect data, assess risks, and engage in discussions with regulators on appropriate paths forward with existing or adjusted specifications. The FDA does not want to penalize sponsors for upgrading methods.

References

1 Guidance for Industry: Q10 Pharmaceutical Quality System. US Food and Drug Administration: Rockville, MD, April 2009; www.fda.gov/downloads/Drugs/Guidances/ucm073517.pdf.

2 Guidance for Industry: Process Validation — General Principles and Practices. US Food and Drug Administration: Rockville, MD, January 2011; www.fda.gov/downloads/Drugs/…/Guidances/UCM070336.pdf.

3 Guidance for Industry (Draft): Established Conditions: Reportable CMC Changes for Approved Drug and Biologic Products. US Food and Drug Administration: Rockville, MD, May 2015; www.fda.gov/downloads/drugs/guidancecomplianceregulatoryinformation/guidances/ucm448638.pdf.

JR Dobbins is research advisor, global regulatory affairs, CMC Biotechnology at Eli Lilly and Company. Stefanie Pluschkell is BTxPS executive director, business strategy and operations, at Pfizer Biotech. Timothy Schofield is senior advisor, technical research and development, at GlaxoSmithKline. Philip Krause is deputy director, OVRR, at CBER, FDA. Julia O’Neill is principal at Tunnell Consulting. Patrick Swann is senior director, CMC regulatory affairs, at Biogen. Joel Welch is lead chemist at CDER, FDA.